entity-fishing is an open-source tool dedicated to the automatic identification and disambiguation of Wikidata entities in multilingual text and PDF documents. The tool is based on machine-learning techniques (Gradient Tree Boosting, CRF, word and entity embeddings) that exploit Wikipedia as a training source.

entity-fishing offers high performance and scalability, and is fully generic in terms of domains and languages. It can thus address a wide variety of use cases. Our own usage focuses on processing technical and scientific documents, taking advantage of the massive amount of scientific knowledge and links present in Wikidata.

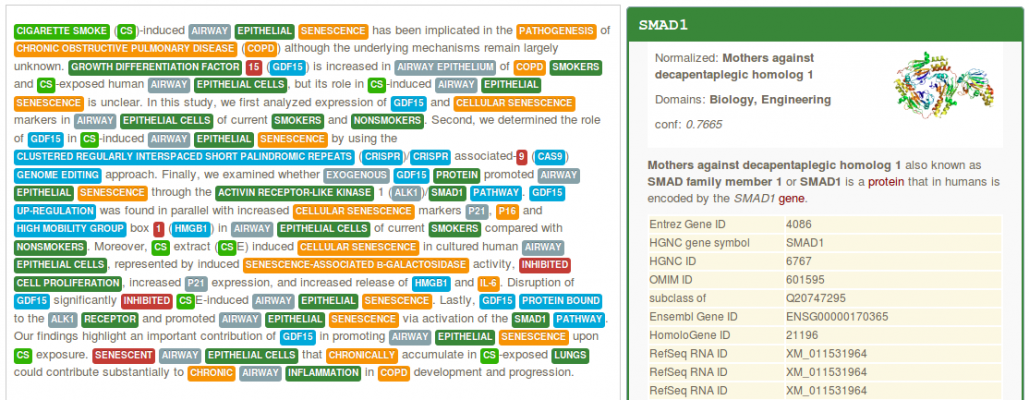

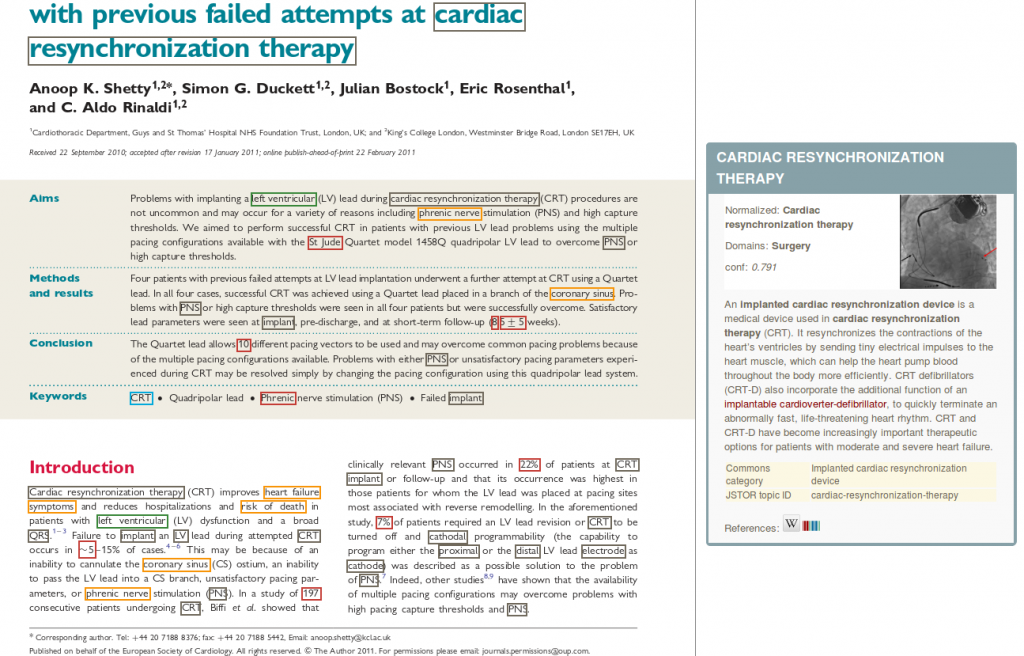

Browser-based PDF document annotation

The tool also performs structure-aware annotation of PDF documents (with a dynamic annotation layer over the PDF), thanks to its integration with GROBID.

Have a look at our presentation at WikiDataCon 2017 for some design and implementation descriptions.

Wikidata

With more than 84 million entities and 1 billion statements, Wikidata is by far the largest open and reusable knowledge base available today.

It is also a remarkable hub of scientific knowledge, containing for instance half a million genes (including the whole human genome) and more than 18 million scientific article records. In addition, Wikidata interfaces with many authoritative, large-scale external scientific knowledge bases via external identifiers.
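To make the role of external identifiers concrete, here is a minimal sketch of how they can be read from a Wikidata entity in its standard JSON form, where each statement's `mainsnak` carries a `datatype` of `external-id`. This is not part of entity-fishing: the function name `external_ids` is an assumption, and the sample entity is hand-written, with a placeholder item ID and placeholder identifier value rather than real Wikidata content.

```python
from typing import Any, Dict, List


def external_ids(entity: Dict[str, Any]) -> Dict[str, List[str]]:
    """Collect external-identifier values from a Wikidata entity JSON dict.

    Returns a mapping from property ID (e.g. "P351") to the list of
    identifier strings found for that property.
    """
    found: Dict[str, List[str]] = {}
    for prop, statements in entity.get("claims", {}).items():
        for statement in statements:
            snak = statement.get("mainsnak", {})
            # Keep only statements typed as external identifiers.
            # "novalue"/"somevalue" snaks have no datavalue and are skipped.
            if snak.get("datatype") != "external-id":
                continue
            if snak.get("snaktype") != "value":
                continue
            found.setdefault(prop, []).append(snak["datavalue"]["value"])
    return found


# Hypothetical entity: "Q0" and "0000" are placeholders, not real data.
sample_entity = {
    "id": "Q0",
    "claims": {
        # P351 is the Entrez Gene ID property (an external identifier).
        "P351": [{
            "mainsnak": {
                "snaktype": "value",
                "property": "P351",
                "datatype": "external-id",
                "datavalue": {"value": "0000", "type": "string"},
            }
        }],
        # P31 ("instance of") points to another item, so it is ignored.
        "P31": [{
            "mainsnak": {
                "snaktype": "value",
                "property": "P31",
                "datatype": "wikibase-item",
                "datavalue": {
                    "value": {"entity-type": "item", "id": "Q7187"},
                    "type": "wikibase-entityid",
                },
            }
        }],
    },
}

print(external_ids(sample_entity))
```

Once an entity has been identified in a text, a mapping like this is what lets a downstream application jump from the Wikidata item to the corresponding record in an external scientific database.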

entity-fishing makes this vast amount of knowledge accessible from text. It combines specialized entity mention recognizers for scientific terms with the whole Wikipedia vocabulary, and applies disambiguation techniques that exploit the context in which the mentions occur.